Your goal is to produce automated labels that would have an mIoU of at least 0.6 with the human-annotated labels, had you also gotten human annotations on corresponding images. A mIoU of 1 means that the two sets of bounding boxes overlap perfectly. An mIoU of 0 means that there is no overlap between two sets of bounding boxes.

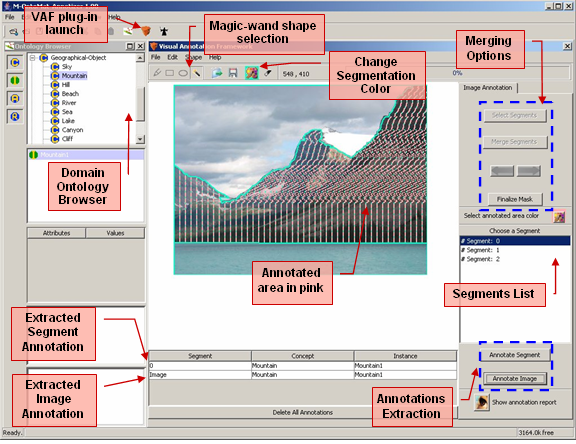

For this exercise, use the mean Intersection over Union (mIoU). The following diagram shows the process:īecause automated labeling involves comparing human-annotated labels to labels produced by machine learning, you need to choose a measure of bounding box quality. Now that it has small training and validation datasets, Ground Truth is ready to train the algorithm that later produces automated labels. For more information, see the notebook and the Amazon SageMaker Developer Guide. Throughout the process, Ground Truth consolidates each label from multiple human-annotated labels to avoid single-annotator bias. Iteration 3 created a training dataset by obtaining human-annotated labels on 200 more randomly chosen images. It’s used later by a supervised machine learning algorithm to produce automated labels. On iteration 2, Mechanical Turk workers annotated another 190 randomly chosen images. This batch validates the end-to-end execution of the labeling task. On iteration 1, Mechanical Turk workers annotated a small test batch of 10 randomly chosen images. The following graph shows the number of images (abbreviated ‘ims’ in the plot) produced on each iteration and the number of bounding boxes in these images. In each iteration, Ground Truth sent out a batch of images to Amazon Mechanical Turk annotators. The plots show that annotating the whole dataset took five iterations. Active learning and automated data labeling To understand how Ground Truth annotates data, let’s look at some of the plots in detail. This produces a lot of information in plot form. When it’s done, run all of the cells in the “Analyze Ground Truth labeling job results” and “Compare Ground Truth results to standard labels” sections. 
Submits the annotation job request to Ground Truth.Creates an object detection annotation job request.Creates object detection instructions for human annotators.Creates a dataset with 1,000 images of birds.You need to modify some of the cells, so read the notebook instructions carefully. Run all of the cells in the “Introduction” and “Run a Ground Truth labeling job” sections of the notebook. On Step 3, make sure to mark “Any S3 bucket” when you create the IAM role! Open the Jupyter notebook, choose the SageMaker Examples tab, and launch object_detection_tutorial.ipynb, as follows. You can follow this step-by-step tutorial to set up an instance. To access the demo notebook, start an Amazon SageMaker notebook instance using an ml.m4.xlarge instance type. Note: The cost of running the demo notebook is about $200. To show how, we use an Amazon SageMaker Jupyter notebook that uses the API to produce bounding box annotations for 1000 images of birds. For finer control over the process, you can use the API. In a previous blog post, Julien Simon described how to run a data labeling job using the AWS Management Console. Run an object detection job with automated data labeling This post explains how automated data labeling works and how to evaluate its results. To decrease labeling costs, use Ground Truth machine learning to choose “difficult” images that require human annotation and “easy” images that can be automatically labeled with machine learning. With Amazon SageMaker Ground Truth, you can easily and inexpensively build more accurately labeled machine learning datasets.
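To make the mIoU measure concrete, here is a minimal Python sketch of how it could be computed for one image. It is an illustration under stated assumptions, not the notebook's own code: the `(x_min, y_min, x_max, y_max)` box format, the function names `iou` and `mean_iou`, and the greedy one-to-one matching of human boxes to automated boxes are all choices made for this example. Unmatched boxes count as an IoU of 0, so two sets of boxes score 1 only when they overlap perfectly.

```python
from typing import List, Tuple

# Assumed box format for this sketch: (x_min, y_min, x_max, y_max).
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def mean_iou(human: List[Box], auto: List[Box]) -> float:
    """Mean IoU between two sets of boxes for one image.

    Assumption: boxes are paired greedily, best overlap first, and each
    box is used at most once. Boxes left unmatched contribute 0.
    """
    if not human and not auto:
        return 1.0  # assumption: two empty sets agree perfectly
    # Score every (human, auto) pair, then take the best pairs first.
    pairs = sorted(
        ((iou(h, a), i, j) for i, h in enumerate(human) for j, a in enumerate(auto)),
        reverse=True,
    )
    used_human, used_auto, total = set(), set(), 0.0
    for score, i, j in pairs:
        if i not in used_human and j not in used_auto:
            used_human.add(i)
            used_auto.add(j)
            total += score
    return total / max(len(human), len(auto))
```

With this sketch, checking an image against the target in this post would look like `mean_iou(human_boxes, auto_boxes) >= 0.6`, where `human_boxes` and `auto_boxes` are hypothetical lists of boxes for the same image.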
